What is MCP? The Protocol That Unlocks AI Agent Potential

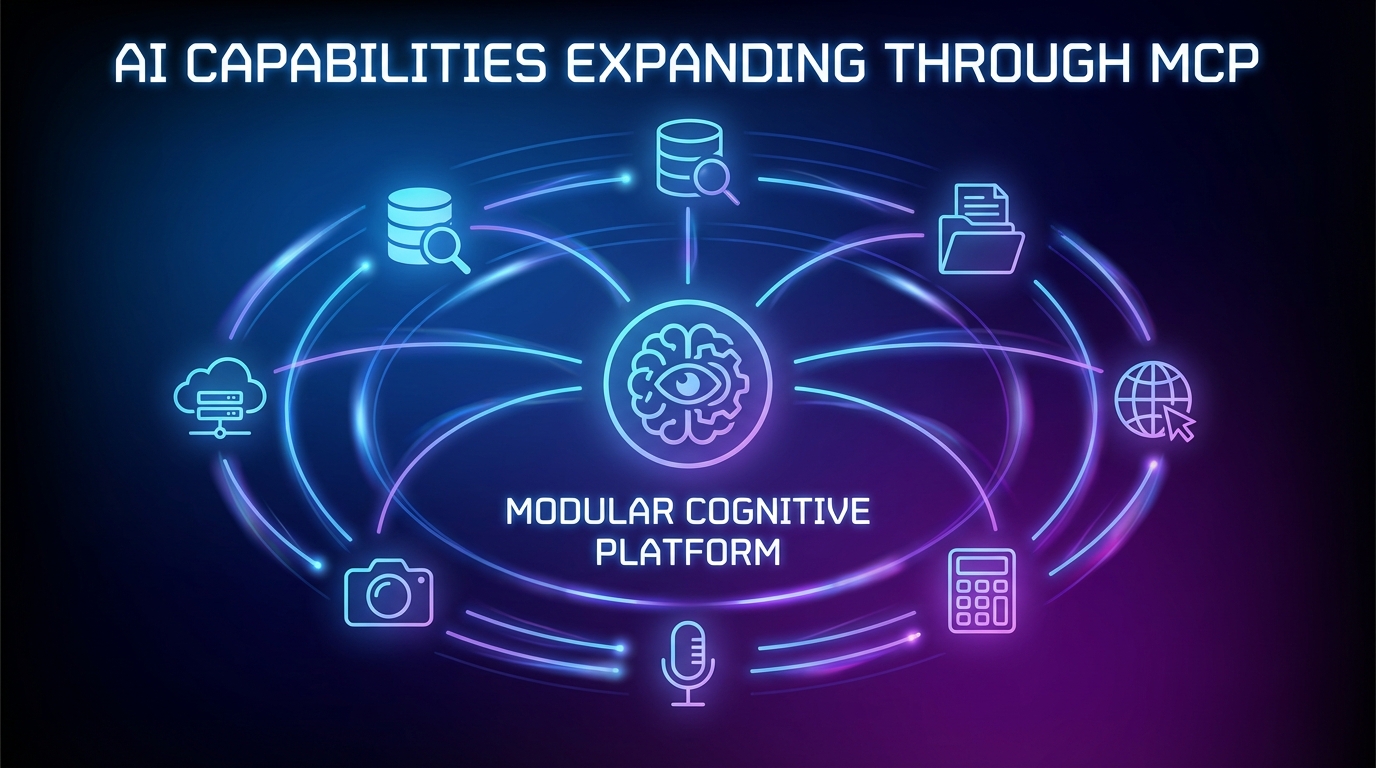

Imagine giving an AI agent the ability not just to talk but to act: to query databases, read files, call APIs, browse the web, and execute code. The Model Context Protocol (MCP) makes this possible by providing an open, standardized protocol that connects AI agents to external tools and data sources.

Before MCP, every AI application needed custom integration code for each tool or data source. Want your agent to access a database? Write a custom connector. Need it to read from a file system? Build another integration. This resulted in fragmented, non-reusable code and severely limited what AI agents could accomplish.

MCP solves this by establishing a universal client-server protocol: AI agents, acting as clients, discover and invoke the capabilities (tools, resources, and prompts) that MCP servers expose. Think of it as a USB-C port for AI agents: one standardized interface that works with countless tools and services.

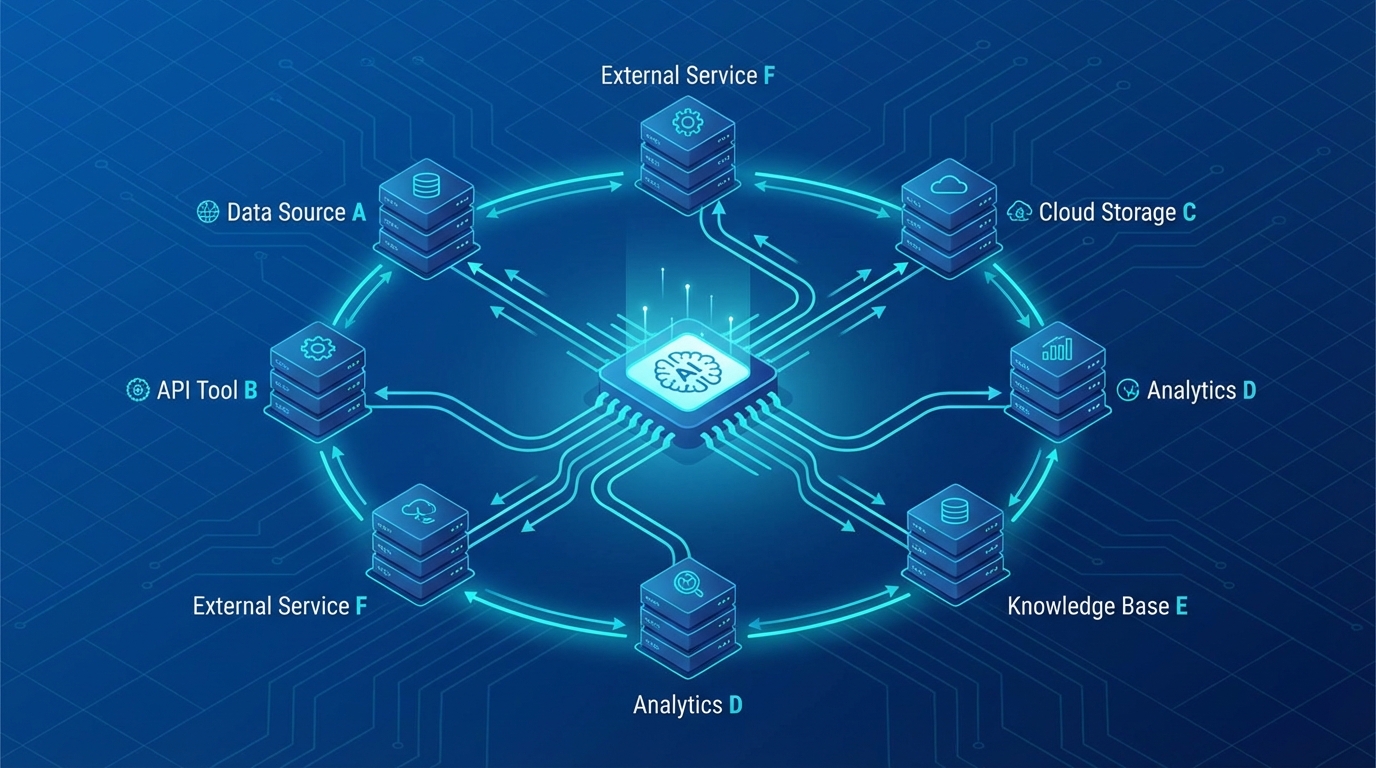

How MCP Connects Agents to Servers

The AI agent (center) communicates with multiple MCP servers over standardized connections, exchanging messages in real time.