AI Hallucination Detector

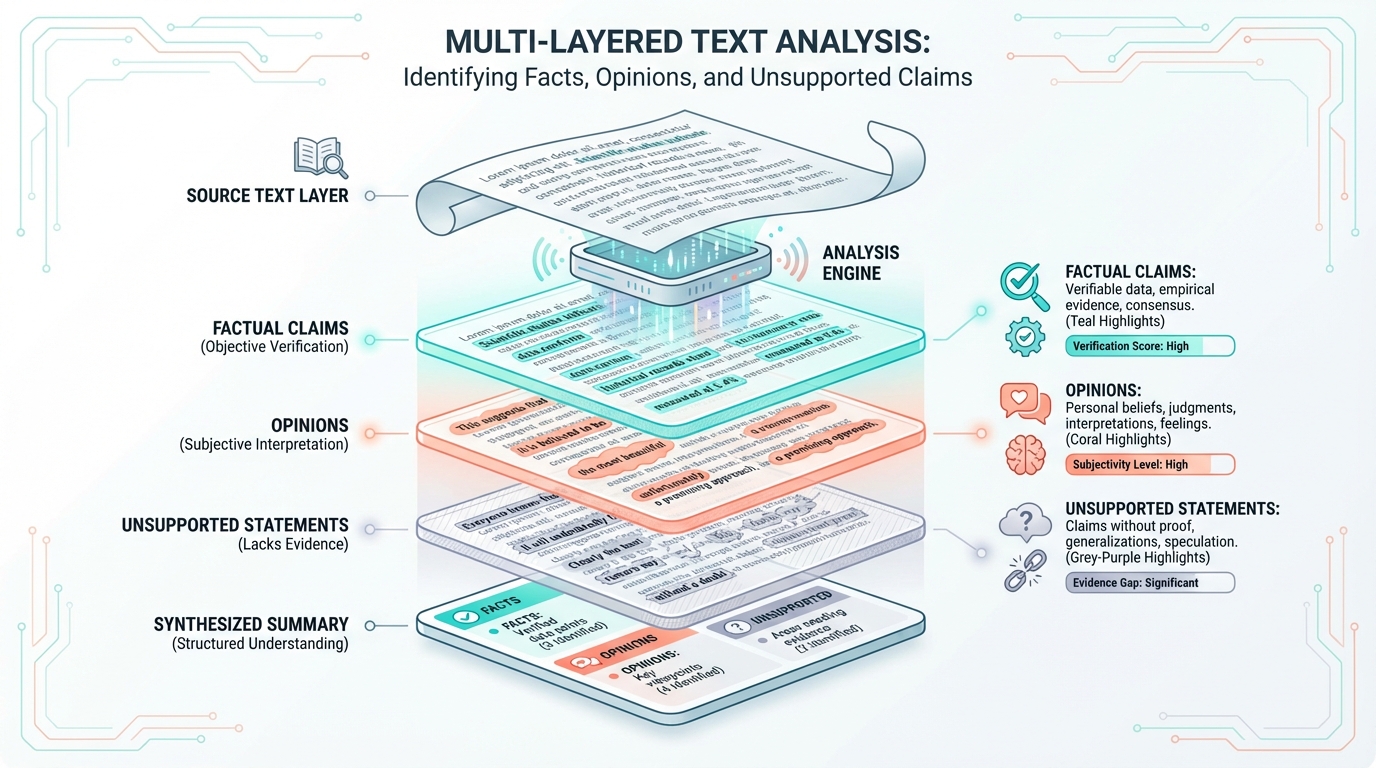

Identify false claims, verify factual accuracy, and detect unsupported statements in AI-generated content with our advanced analysis tool

Understanding the phenomenon of AI-generated false information

AI models sometimes generate information that sounds plausible but is completely invented, such as citing research papers that don't exist or describing historical events that never happened.

Models may mix real facts with incorrect details, such as attributing accurate quotes to the wrong person or combining elements from different events into a single narrative.

AI can create spurious relationships between unrelated concepts, presenting correlations as causation or linking events that have no actual historical connection.

Models may confuse dates, timelines, and chronological sequences, placing events in wrong time periods or misrepresenting when discoveries or inventions occurred.

AI can generate convincing-sounding statistics and numbers that have no basis in reality, complete with percentages and figures that seem authoritative but are invented.

Models frequently misattribute quotes, discoveries, or achievements to the wrong individuals, or invent contributions by real people who never made them.

As artificial intelligence becomes increasingly integrated into information retrieval and content generation, understanding AI hallucinations has become crucial for anyone working with or relying on AI-generated content. Hallucinations aren't simple errors: they're a fundamental characteristic of how large language models operate. That makes them particularly insidious, because the false information is often presented with the same confidence as accurate facts.

AI hallucinations stem from the fundamental architecture of large language models. These systems don't "know" facts in the way humans do. They generate text by predicting which word (more precisely, which token) should come next, based on patterns learned from vast amounts of training data. This probabilistic approach means models are essentially performing sophisticated pattern matching rather than retrieving verified information from a knowledge base.
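To make the next-word idea concrete, here is a minimal sketch built on a toy bigram model. It is far simpler than a real large language model and is meant only as an illustration; the corpus, function names, and seed are all hypothetical. It shows how a system that only learns which word tends to follow which can give real probability to a fluent but false continuation.

```python
import random
from collections import Counter, defaultdict
from typing import Dict


def train_bigram(corpus: str) -> Dict[str, Counter]:
    """Count, for every word, which words follow it in the corpus."""
    counts: Dict[str, Counter] = defaultdict(Counter)
    words = corpus.split()
    for prev, nxt in zip(words, words[1:]):
        counts[prev][nxt] += 1
    return counts


def next_word_distribution(counts: Dict[str, Counter], word: str) -> Dict[str, float]:
    """Turn follow-up counts into probabilities for the next word."""
    following = counts.get(word, Counter())
    total = sum(following.values())
    return {w: c / total for w, c in following.items()} if total else {}


def generate(counts: Dict[str, Counter], start: str, length: int, seed: int = 0) -> str:
    """Sample a continuation one word at a time, with no notion of truth."""
    rng = random.Random(seed)
    out = [start]
    for _ in range(length):
        dist = next_word_distribution(counts, out[-1])
        if not dist:
            break
        words, probs = zip(*dist.items())
        out.append(rng.choices(words, weights=probs, k=1)[0])
    return " ".join(out)


# Toy training text (hypothetical); every sentence in it is true.
corpus = (
    "alexander fleming discovered penicillin . "
    "william herschel discovered uranus . "
    "william herschel built telescopes ."
)
model = train_bigram(corpus)
dist = next_word_distribution(model, "discovered")
print(dist)
print(generate(model, "alexander", 3))
```

After the word "discovered", this toy model gives "penicillin" and "uranus" equal probability, so "alexander fleming discovered uranus" is as likely an output as the true sentence. Nothing in the sampling step checks facts, which is the core of the problem described above.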

Several factors contribute to hallucinations:

Different types of hallucinations require different detection strategies. Understanding these patterns helps you develop a critical eye when reviewing AI-generated content:

Be especially suspicious of precise statistics, measurements, dates, and quantities. AI often generates plausible-sounding numbers that are completely fabricated. Always verify specific numerical claims against authoritative sources.

Verify that cited sources actually exist. AI models frequently invent academic papers, books, or articles with realistic-sounding titles and authors. Search for the exact citation to confirm it's real.

Check that achievements, quotes, and discoveries are correctly attributed. Models often confuse similar figures or assign accomplishments to more famous individuals in the same field.

Detailed technical specifications, measurements, and product details are prone to hallucination. Cross-reference with manufacturer documentation or official sources.

Verify that timelines make sense. Check that events, publications, and developments occurred in the stated time periods and that cause-effect relationships are temporally possible.

Be cautious of highly specialized or niche information, especially in technical fields. These areas are more prone to hallucination because training data may be limited or the model may mix concepts from different domains.

Certain patterns in AI-generated text should immediately trigger your skepticism. While not every instance of these patterns indicates a hallucination, they warrant additional scrutiny:

"The study, published in the Journal of Advanced Neuroscience on March 15, 2019, by Dr. Sarah Mitchell and her team at Stanford, found that exactly 67.3% of participants showed improvement..."

Why suspicious: The abundance of specific details (exact date, percentage, names, institution) makes this sound authoritative, but each detail is a potential fabrication. Real citations are verifiable; hallucinated ones include just enough detail to sound convincing.
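As a rough illustration of how this red flag could be surfaced automatically, the sketch below uses a few regular expressions to list the specific details in a passage that deserve verification. The pattern set and the function name `specificity_signals` are assumptions made for this example, not a description of how any particular detector works. A match means "check this", not "this is false".

```python
import re
from typing import Dict, List

MONTHS = (
    "January|February|March|April|May|June|July|"
    "August|September|October|November|December"
)

# Hypothetical, deliberately small pattern set; real text needs far more coverage.
PATTERNS = {
    "percentage": re.compile(r"\b\d+(?:\.\d+)?%"),
    "full_date": re.compile(r"\b(?:" + MONTHS + r")\s+\d{1,2},\s+\d{4}\b"),
    "titled_name": re.compile(r"\b(?:Dr|Prof)\.\s+[A-Z][a-z]+(?:\s+[A-Z][a-z]+)?"),
    "journal": re.compile(r"\bJournal of(?:\s+[A-Z][a-z]+)+"),
}


def specificity_signals(text: str) -> Dict[str, List[str]]:
    """Return each kind of precise detail found in the text, with the matches."""
    found = {}
    for name, pattern in PATTERNS.items():
        matches = pattern.findall(text)
        if matches:
            found[name] = matches
    return found


EXAMPLE = (
    "The study, published in the Journal of Advanced Neuroscience on "
    "March 15, 2019, by Dr. Sarah Mitchell and her team at Stanford, found "
    "that exactly 67.3% of participants showed improvement..."
)

for kind, matches in specificity_signals(EXAMPLE).items():
    print(kind, "->", matches)
```

On the example sentence this flags the percentage, the full date, the titled name, and the journal title. Each one is a separate item to look up before trusting the passage.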

"While there's some debate in the field, most experts agree that the mechanism was definitively proven by the landmark 2018 experiment..."

Why suspicious: The text acknowledges that the question is debated, then immediately asserts that it was "definitively proven." This pattern often indicates the model is generating a plausible-sounding consensus where none may exist.

"Leonardo da Vinci's experiments with electricity in the 1490s laid groundwork for later developments..."

Why suspicious: This attributes knowledge or technology to a period before it was available. Models sometimes conflate different historical periods or assign modern concepts to historical figures.
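A temporal check like this can be sketched in a few lines, assuming you have a trusted reference table to compare against. Everything below is hypothetical scaffolding: the `Claim` structure, the two lookup tables, and especially the cutoff year, which is an illustrative placeholder (William Gilbert's De Magnete, an early systematic study of electrical effects, appeared in 1600) rather than an authoritative boundary.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Claim:
    person: str
    concept: str
    year: int


# Hand-entered reference data for the example; a real checker needs a curated source.
LIFESPANS = {"Leonardo da Vinci": (1452, 1519)}
EARLIEST_PLAUSIBLE_YEAR = {"experiments with electricity": 1600}  # illustrative cutoff


def temporal_issues(claim: Claim) -> List[str]:
    """Return human-readable reasons why the claim's timeline looks implausible."""
    issues = []
    span = LIFESPANS.get(claim.person)
    if span is not None and not (span[0] <= claim.year <= span[1]):
        issues.append(
            f"{claim.year} is outside {claim.person}'s lifetime ({span[0]}-{span[1]})"
        )
    earliest = EARLIEST_PLAUSIBLE_YEAR.get(claim.concept)
    if earliest is not None and claim.year < earliest:
        issues.append(
            f"'{claim.concept}' dated {claim.year}, before reference year {earliest}"
        )
    return issues


claim = Claim("Leonardo da Vinci", "experiments with electricity", 1490)
for issue in temporal_issues(claim):
    print(issue)
```

Note that the lifespan check alone passes here, since the 1490s fall within Leonardo's lifetime. The anachronism only shows up when the concept itself is dated, which is why both checks are included.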

When you need to rely on AI-generated information, especially for important decisions or published content, implement a systematic verification approach:

Understanding AI hallucinations isn't just about catching errors—it's about developing appropriate trust relationships with AI tools. As these systems become more capable and more widely deployed, the stakes of hallucinations increase. Incorrect medical information, flawed legal analysis, fabricated historical claims, and false technical specifications can all have serious consequences.

The challenge is that hallucinations often appear in content that is otherwise accurate. A response might be, say, 95% correct, with the remaining 5% fabricated and seamlessly woven in. This makes blanket skepticism impractical, since we can't manually verify every AI-generated sentence, but uncritical acceptance is dangerous.

The solution lies in risk-appropriate verification. For casual inquiries and creative applications, hallucinations may be merely inconvenient. For high-stakes applications—medical advice, legal guidance, financial decisions, academic research, published journalism—rigorous verification is non-negotiable.
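One way to make risk-appropriate verification explicit is to encode the tiers as a small lookup. This is only a sketch of the idea. The tier names, the function `required_verification`, and the choice to treat unknown contexts as high-stakes are all assumptions for this example, while the context lists mirror the ones in the paragraph above.

```python
from enum import Enum


class Verification(Enum):
    LIGHT = "spot-check anything that looks off"
    RIGOROUS = "verify every factual claim against authoritative sources"


HIGH_STAKES = {
    "medical advice",
    "legal guidance",
    "financial decisions",
    "academic research",
    "published journalism",
}
LOW_STAKES = {"casual inquiry", "creative application"}


def required_verification(context: str) -> Verification:
    """Map a usage context to the verification effort it calls for."""
    key = context.strip().lower()
    if key in HIGH_STAKES:
        return Verification.RIGOROUS
    if key in LOW_STAKES:
        return Verification.LIGHT
    # Assumption: when the context is unrecognized, err on the side of caution.
    return Verification.RIGOROUS


print(required_verification("Medical advice").value)
print(required_verification("casual inquiry").value)
```

Defaulting to the stricter tier is a design choice: a missed check in a high-stakes setting usually costs more than an unnecessary check in a casual one.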

Developing a healthy relationship with AI tools means understanding their capabilities and limitations. Here are practical guidelines for different contexts:

As AI technology evolves, so do approaches to mitigating hallucinations. Researchers are exploring several promising directions: retrieval-augmented generation (RAG) systems that ground responses in verifiable documents, uncertainty quantification methods that help models express confidence levels, fact-checking integrations that verify claims against knowledge bases, and architectural improvements that better separate knowledge from generation.
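The fact-checking direction can be illustrated with a deliberately tiny sketch that compares a claim against a trusted lookup table. The knowledge base here is a hypothetical two-entry dictionary and `check_claim` is an invented helper. Production systems would also need entity resolution, paraphrase handling, and a much larger, maintained source of truth.

```python
from typing import Dict, Tuple

KnowledgeBase = Dict[Tuple[str, str], str]

# Hypothetical trusted facts keyed by (subject, relation).
KB: KnowledgeBase = {
    ("penicillin", "discovered_by"): "Alexander Fleming",
    ("Leonardo da Vinci", "birth_year"): "1452",
}


def check_claim(kb: KnowledgeBase, subject: str, relation: str, claimed: str) -> str:
    """Classify a claim as supported, contradicted, or unverified by the knowledge base."""
    known = kb.get((subject, relation))
    if known is None:
        # No entry means the claim cannot be confirmed either way.
        return "unverified"
    if known.casefold() == claimed.casefold():
        return "supported"
    return "contradicted"


print(check_claim(KB, "penicillin", "discovered_by", "Alexander Fleming"))
print(check_claim(KB, "penicillin", "discovered_by", "Louis Pasteur"))
print(check_claim(KB, "aspirin", "discovered_by", "Alexander Fleming"))
```

The third result matters as much as the first two. Returning "unverified" instead of guessing is the kind of behavior that uncertainty-aware systems aim for.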

However, eliminating hallucinations entirely may be impossible given the fundamental nature of how these models work. The pattern-matching approach that makes large language models so versatile and capable is the same characteristic that leads to hallucinations. Future systems will likely be better at identifying uncertainty and less prone to confident fabrication, but human oversight will remain essential for high-stakes applications.

The key takeaway is that AI hallucinations are not a bug to be fixed but a feature of how these systems operate. Understanding this reality allows us to use AI tools effectively while maintaining appropriate skepticism and verification processes. As these tools become more powerful and more integrated into our information ecosystem, developing hallucination literacy—the ability to critically evaluate AI-generated content—becomes an essential skill.